What students actually think about AI marking and feedback

King's School, Chester ran an independent student voice survey on TeachEdge after around 18 months of use. Here's what 148 students said about clarity, speed, progress, and trust in marking.

Quick Summary

- Students rated clarity of feedback highest (91.2% agree/strongly agree).

- Timely feedback scored strongly (84.4%), which matters for acting while questions are still fresh.

- Trust in marking accuracy was the toughest item, but still positive (78.4%).

- These results come after sustained use (around 18 months), not a one-off novelty demo.

- Independent surveys like this move the AI-in-schools conversation beyond hype and fear.

King's School, Chester recently did something I wish more schools would do: it asked its students and shared the answers.

Stephen Walton (Head of Business and Economics) ran a student voice survey asking students what they actually think about AI marking and feedback after using TeachEdge for around 18 months.

A key point up front: TeachEdge had no involvement in designing or administering this survey, or in collecting the responses. Stephen and the school ran it independently and shared the results with us afterwards.

There were 148 student responses across GCSE and A Level Business and Economics.

The headline results

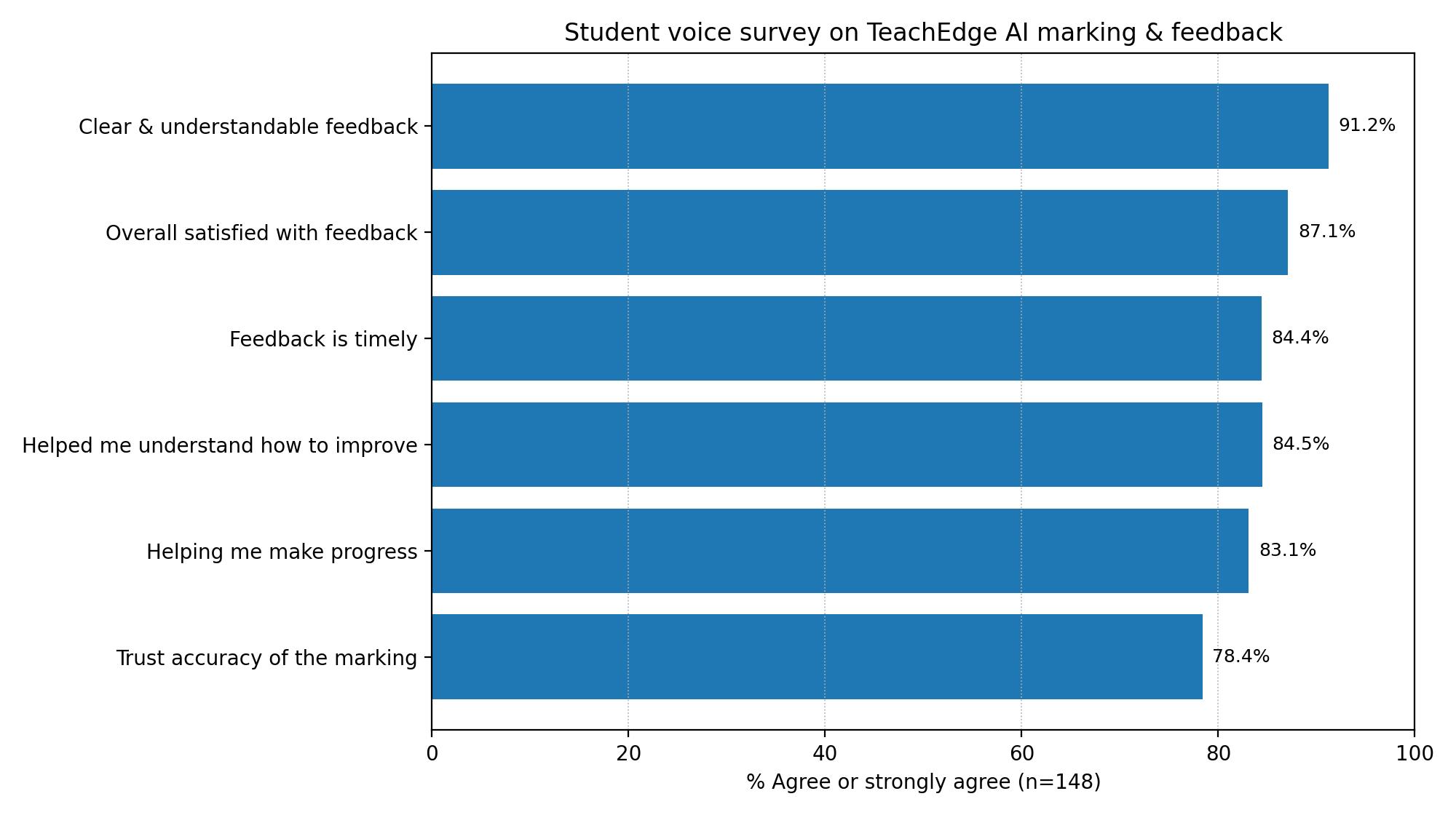

Across six statements, the proportion who agreed or strongly agreed was:

- 91.2% – "AI feedback is clear and understandable"

- 87.1% – "Overall I am satisfied by the AI feedback I have received"

- 84.5% – "AI feedback has helped me understand how to improve my answers"

- 84.4% – "AI feedback is timely"

- 83.1% – "AI feedback is helping me to make progress"

- 78.4% – "I trust the accuracy of the AI marking"

Figure 1: Student voice survey results from King's School, Chester (n=148): the percentage of students who agreed or strongly agreed with each statement.
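For anyone who prefers head counts to percentages, here is a rough sketch of the arithmetic behind the figures above. It assumes all 148 students answered every statement, which the survey summary does not confirm, so treat the counts as approximate. The names `RESPONSES`, `results` and `approx_count` are just illustrative.

```python
# Agree/strongly agree percentages as reported in the survey results.
RESPONSES = 148  # total responses reported by the school

results = {
    "clear and understandable": 91.2,
    "satisfied overall": 87.1,
    "helped me understand how to improve": 84.5,
    "timely": 84.4,
    "helping me to make progress": 83.1,
    "trust the accuracy of the marking": 78.4,
}


def approx_count(percent, n=RESPONSES):
    """Approximate head count, ASSUMING all n students answered the statement."""
    return round(n * percent / 100)


# Approximate number of students agreeing with each statement.
counts = {label: approx_count(pct) for label, pct in results.items()}

# Gap between the highest and lowest scoring statements, in percentage points.
spread = round(max(results.values()) - min(results.values()), 1)

for label, pct in results.items():
    print(f"{pct:>5.1f}%  ~{counts[label]:>3} of {RESPONSES}  {label}")
print(f"Gap between highest and lowest statement: {spread} percentage points")
```

On that assumption, roughly 135 of 148 students found the feedback clear, and roughly 116 of 148 trusted the marking accuracy.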

The bits I think matter most

Three things stand out.

1) Clarity is the biggest win

The strongest result is that students find the feedback clear and understandable.

That matters because "feedback" only helps if a student can actually use it under real exam pressure. Clarity beats quantity.

2) Speed is not just convenient, it changes behaviour

Timeliness came through strongly too.

Feedback often reaches students after the class has already moved on. Fast feedback makes it more likely they will act on it while the question is still fresh.

3) Trust in accuracy is the hardest question, and it still landed well

The lowest score (though still strong) was trust in marking accuracy.

That makes sense. Students are rightly sceptical about any marking system, human or otherwise. If anything, the fact that this score was not 95% makes the survey feel more believable. Trust is earned slowly, especially with assessment.

Why the "18 months" detail matters

This was not a novelty effect.

These students were not reacting to a one-off demo lesson. They have had AI-supported feedback in their routine practice for well over a year. That is what makes these results genuinely useful.

What we take from this (and what we'll keep improving)

For me, the message from students is pretty clear:

- Make feedback easy to understand

- Get it back quickly

- Be transparent about marking decisions

On our side, the work is ongoing, especially around trust and accuracy. We're not claiming perfection. What we are building is a workflow where AI does the heavy lifting, but teachers stay in control.

That includes:

- exam-board aligned prompts

- assessment objective breakdowns where relevant

- teacher review and editing before anything is released to students

Thank you

Finally, a genuine thank you to Stephen Walton for running the survey and for being happy to share the results publicly. It's generous, and it helps move the conversation about AI in schools onto something more useful than hype or fear.
